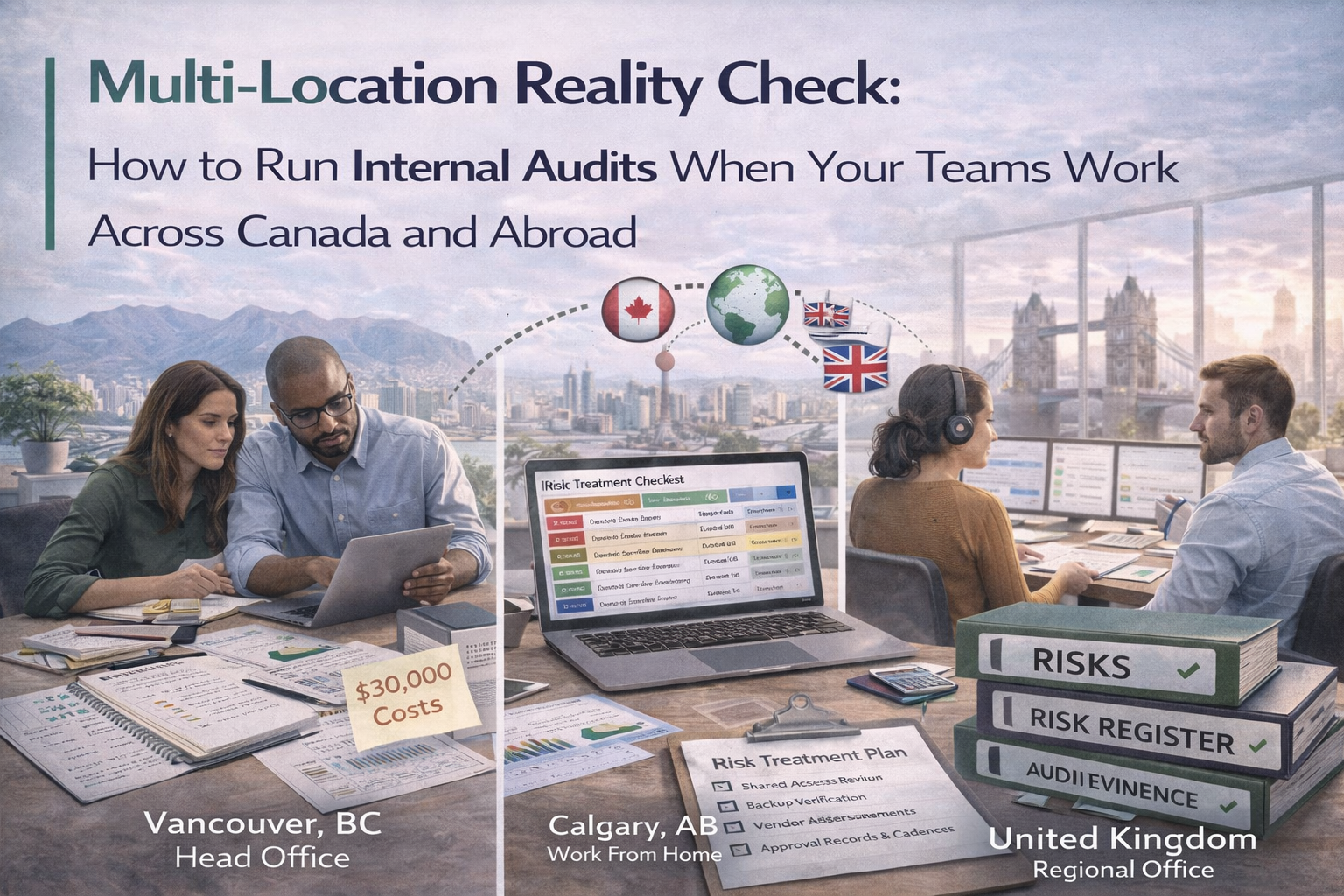

A practical guide to running internal audits for distributed teams with consistent evidence, sampling, and audit-ready processes across multiple locations.

If your teams work across Canada and abroad, internal audits can quickly turn into missed interviews, inconsistent evidence, unclear ownership, and follow-up loops that never close. Those problems are real, but they are usually process problems, not geography problems.

This playbook shows how to run strong internal audits across remote engineers, offshore teams, regional operations, and multiple time zones without flying people around or losing control of the audit.

Most distributed audit problems are actually process failures. Once those are fixed, location matters a lot less than teams assume.

Fix those process failures, and distributed audits become much more predictable.

The goal is not separate audits by country. The goal is one internal audit program with shared criteria, consistent sampling, local evidence lanes, and central reporting.

| Role | Responsibilities |
|---|---|
| Hub: central audit function | Owns the audit plan and sampling rules, runs interviews, writes the report, logs findings, and tracks corrective action verification. |
| Spokes: local control owners | Provide evidence packs, attend interviews, execute corrective actions, and attach closure proof. |

This model keeps one standard without removing local accountability.

Some controls should be tested once because they are global by design. Others need regional sampling because execution changes by office, team, or geography.

Multi-location audits need defensible sampling. Without that, the whole exercise feels arbitrary.
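
One way to make sampling defensible is to draw it reproducibly, so anyone can re-derive the same sample from the same population later. The sketch below is a minimal illustration, not a prescribed method: it assumes each record carries an `id` and a `location` field, and the function name `sample_by_location`, the change-ticket IDs, and the seed value are all hypothetical.

```python
import random
from collections import defaultdict


def sample_by_location(records, per_location, seed):
    """Pick up to `per_location` records from each location, reproducibly."""
    rng = random.Random(seed)  # fixed seed so the draw can be repeated later
    by_location = defaultdict(list)
    for record in records:
        by_location[record["location"]].append(record)

    sample = {}
    for location in sorted(by_location):  # sorted so the draw order is stable
        pool = sorted(by_location[location], key=lambda r: r["id"])
        k = min(per_location, len(pool))  # small sites contribute what they have
        sample[location] = rng.sample(pool, k)
    return sample


# Hypothetical population: twelve change tickets spread over three locations.
changes = [
    {"id": f"CHG-{n:03d}", "location": loc}
    for n, loc in enumerate(["Canada-HQ", "Abroad-EU", "Abroad-APAC"] * 4, start=1)
]

picked = sample_by_location(changes, per_location=2, seed=2024)
for location, items in picked.items():
    print(location, [item["id"] for item in items])
```

Recording the seed, the population export date, and the per-location sample size in the audit plan is what lets a reviewer confirm the sample was not hand-picked.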

If each region sends proof in its own format, internal audits turn into file hunting. The fix is simple: quarterly evidence packs stored in your ISMS SharePoint, with location tagging built in.

| Minimum evidence pack folders | What they help with |
|---|---|
| Access Reviews | Identity and privilege sampling |
| Logging and Monitoring Reviews | Control operation proof by period |
| Vulnerability and Patch | Regional remediation evidence |
| Change Samples | Execution testing across teams |
| Backup and Restore Tests | Recoverability evidence |
| Incident Response and Tabletops | Preparedness and lessons learned |
| Vendor Reviews | Third-party governance by period |
| Internal Audit and CAPA | Findings and closure evidence |
| Management Review | Decision and oversight records |

Add one metadata field for location. That can be Canada-HQ, Canada-West, Canada-East, Abroad-EU, Abroad-APAC, or whatever naming convention fits your organization. Once location is tagged, auditors can filter instantly instead of guessing where evidence belongs.
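
To show what the location tag buys you, here is a small sketch that filters an evidence index by location and quarter and reports which of the minimum folders have nothing in them yet. It assumes the index has been exported to plain records; the `EvidenceItem` fields, the quarter label format, and the filenames are hypothetical, and nothing here calls a real SharePoint API.

```python
from dataclasses import dataclass

# Folder names taken from the minimum evidence pack table.
REQUIRED_FOLDERS = [
    "Access Reviews",
    "Logging and Monitoring Reviews",
    "Vulnerability and Patch",
    "Change Samples",
    "Backup and Restore Tests",
    "Incident Response and Tabletops",
    "Vendor Reviews",
    "Internal Audit and CAPA",
    "Management Review",
]


@dataclass(frozen=True)
class EvidenceItem:
    folder: str
    location: str  # the single metadata tag, e.g. "Canada-West"
    quarter: str   # assumed label format, e.g. "2025-Q1"
    filename: str


def filter_evidence(items, location, quarter):
    """Return only the items tagged with this location and quarter."""
    return [i for i in items if i.location == location and i.quarter == quarter]


def missing_folders(items, location, quarter):
    """List required folders with no evidence for this location and quarter."""
    present = {i.folder for i in filter_evidence(items, location, quarter)}
    return [folder for folder in REQUIRED_FOLDERS if folder not in present]


# Hypothetical index entries.
index = [
    EvidenceItem("Access Reviews", "Canada-West", "2025-Q1", "access-review.xlsx"),
    EvidenceItem("Vendor Reviews", "Canada-West", "2025-Q1", "vendor-review.pdf"),
    EvidenceItem("Access Reviews", "Abroad-EU", "2025-Q1", "access-review.xlsx"),
]

print(missing_folders(index, "Canada-West", "2025-Q1"))
```

Running the gap check before the audit window opens turns "where is the evidence" into a list each location can act on.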

Distributed interviews usually fail when they are too informal. A scripted interview format works better because it keeps every location on the same standard.

Do not try to do everything live on calls. A strong multi-location audit asks teams to upload evidence 48 to 72 hours before the interview, along with a checklist of what will be reviewed. Then the interview is used for clarification, not document collection.
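
Across time zones, the 48 to 72 hour window is easy to miscalculate by hand. The following sketch computes it from a timezone-aware interview time. It reads the guidance as "request evidence at 72 hours out, treat 48 hours out as the deadline", which is an interpretation rather than something the text spells out, and the fixed UTC offset and function names are hypothetical.

```python
from datetime import datetime, timedelta, timezone


def upload_window(interview_start):
    """Return (opens, closes): 72 and 48 hours before the interview."""
    if interview_start.tzinfo is None:
        raise ValueError("interview_start must be timezone-aware")
    return (
        interview_start - timedelta(hours=72),
        interview_start - timedelta(hours=48),
    )


def evidence_status(uploaded_at, interview_start):
    """Label an upload 'on time' if it arrived by the 48-hour deadline."""
    _, closes = upload_window(interview_start)
    return "on time" if uploaded_at <= closes else "late"


# Hypothetical interview at 14:00 in a UTC-4 office.
office_tz = timezone(timedelta(hours=-4))
interview = datetime(2025, 3, 20, 14, 0, tzinfo=office_tz)

opens, closes = upload_window(interview)
print("Request evidence from:", opens.astimezone(timezone.utc).isoformat())
print("Upload deadline:      ", closes.astimezone(timezone.utc).isoformat())

# An upload logged in UTC by a team in another region.
upload = datetime(2025, 3, 18, 17, 0, tzinfo=timezone.utc)
print(evidence_status(upload, interview))
```

Keeping every timestamp timezone-aware means uploads logged in one region can be compared directly against a deadline set in another.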

Distributed audits often fail at closure time. To fix that, define a real closure rule for every finding.

Add a verification plan to every action. For example: re-check the next quarter’s access review, re-sample three critical patches next month, or confirm a vendor review record exists before renewal. That is how repeat findings stop spreading across locations.
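
A closure rule is easier to enforce when it is written as an explicit check. The sketch below shows one possible rule, assuming a finding can close only when the corrective action is done, closure proof is attached, a verification plan exists, and that verification has been performed. The `Finding` fields and the rule itself are illustrative assumptions; adapt them to whatever your own closure rule requires.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Finding:
    finding_id: str
    location: str
    action_done: bool = False
    closure_proof: Optional[str] = None      # link or filename of attached proof
    verification_plan: Optional[str] = None  # e.g. "re-check next quarter's access review"
    verified: bool = False


def closure_gaps(finding):
    """Return the reasons a finding cannot be closed yet (empty list if none)."""
    gaps = []
    if not finding.action_done:
        gaps.append("corrective action not executed")
    if not finding.closure_proof:
        gaps.append("closure proof not attached")
    if not finding.verification_plan:
        gaps.append("no verification plan")
    elif not finding.verified:
        gaps.append("verification not yet performed")
    return gaps


def can_close(finding):
    return not closure_gaps(finding)


# Hypothetical finding: fixed and evidenced, but not yet re-verified.
finding = Finding(
    finding_id="F-001",
    location="Abroad-APAC",
    action_done=True,
    closure_proof="patch-report.pdf",
    verification_plan="re-sample three critical patches next month",
)
print(can_close(finding), closure_gaps(finding))
```

Returning the list of gaps, not just a yes or no, gives the local control owner a concrete next step for each open finding.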

Whether your framework is ISO 27001 or SOC 2, your internal audit output should be easy to follow, not bloated.

| Cadence | What it looks like |
|---|---|
| Monthly micro-audit | Sample 10 controls, rotate across 2 locations, keep the session to 60 to 90 minutes total. |
| Quarterly structured internal audit | Broader sampling, refreshed management review inputs, updated vendor reviews, and optionally one tabletop or DR exercise record. |

This rhythm keeps evidence current all year without burning out distributed teams.
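
The monthly rotation across two locations can be planned ahead so no site is skipped or over-audited. This is a minimal sketch assuming a simple round-robin over an ordered location list; the function name `rotation_schedule`, the month labels, and the choice of round-robin are hypothetical, and you may prefer to weight higher-risk locations more often.

```python
def rotation_schedule(locations, months, per_session=2):
    """Assign `per_session` locations to each month, cycling through the list."""
    if not 0 < per_session <= len(locations):
        raise ValueError("per_session must be between 1 and the number of locations")

    schedule = {}
    cursor = 0
    for month in months:
        # Take the next `per_session` locations, wrapping around at the end.
        schedule[month] = [
            locations[(cursor + i) % len(locations)] for i in range(per_session)
        ]
        cursor = (cursor + per_session) % len(locations)
    return schedule


locations = ["Canada-HQ", "Canada-West", "Canada-East", "Abroad-EU", "Abroad-APAC"]
months = ["2025-01", "2025-02", "2025-03", "2025-04", "2025-05"]

for month, sites in rotation_schedule(locations, months).items():
    print(month, sites)
```

With five locations and two per month, every location is covered twice in five months, which makes coverage easy to evidence at management review.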

Multi-location internal audits do not fail because teams are far apart. They fail because the audit design is loose. When criteria are shared, evidence is standardized, interviews are scripted, and closure is verified, distance stops being the main problem.

That is how distributed teams stay audit-ready without turning every cycle into a scramble.